Project Overview

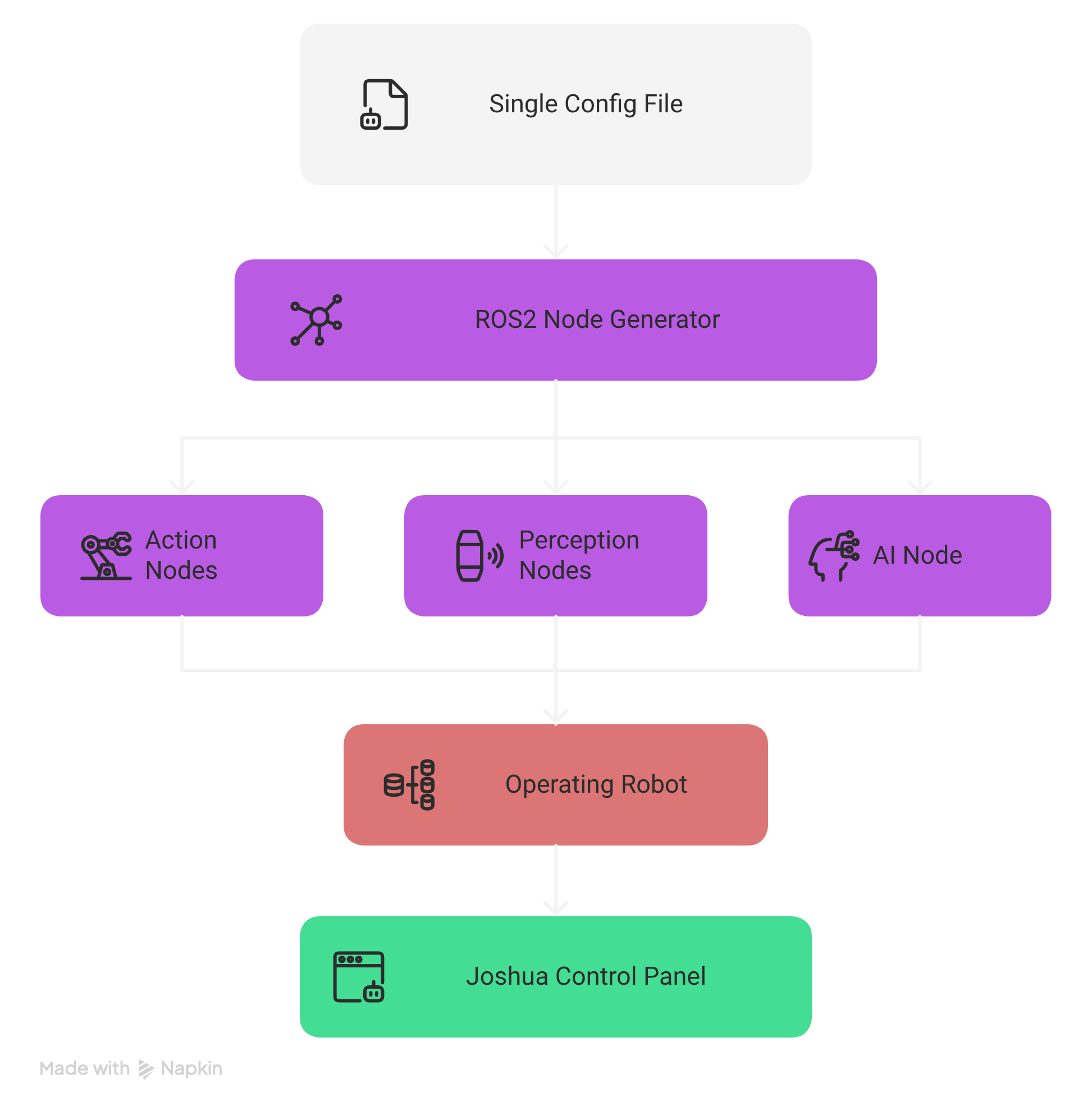

JOSHUA transforms a single configuration file into a fully operational robot system using ROS2, Protocol Buffers, and pluggable AI inference.

Configuration-Driven Design

Define your entire robot system (hardware, sensors, AI models, and operation mode) in a single Protocol Buffers text-format configuration file. Reconfiguring the system requires no code changes.

Hardware Abstraction Layer

Plug-and-play support for servo motors (STS3215, Pybricks SPIKE), cameras (OpenCV), encoders, and LiDAR sensors with factory-pattern instantiation and mock drivers for testing.
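As a rough illustration of factory-pattern instantiation with a mock driver, the sketch below maps a driver-type string (as it might appear in the configuration file) to a constructor. Every name here (`ServoDriver`, `MockServo`, `register_servo`, `create_servo`) is hypothetical and chosen for the example; JOSHUA's actual driver interfaces may look quite different.

```python
from typing import Callable, Dict


class ServoDriver:
    """Minimal servo interface (hypothetical, not JOSHUA's actual API)."""

    def set_position(self, degrees: float) -> None:
        raise NotImplementedError

    def get_position(self) -> float:
        raise NotImplementedError


class MockServo(ServoDriver):
    """In-memory stand-in that needs no hardware, useful in tests."""

    def __init__(self, servo_id: int) -> None:
        self.servo_id = servo_id
        self._position = 0.0

    def set_position(self, degrees: float) -> None:
        self._position = degrees

    def get_position(self) -> float:
        return self._position


# Registry mapping a driver-type string from the config to a constructor.
_SERVO_REGISTRY: Dict[str, Callable[[int], ServoDriver]] = {}


def register_servo(type_name: str, ctor: Callable[[int], ServoDriver]) -> None:
    """Make a driver available under the given type name."""
    _SERVO_REGISTRY[type_name] = ctor


def create_servo(type_name: str, servo_id: int) -> ServoDriver:
    """Factory: look up the constructor by name and build the driver."""
    try:
        ctor = _SERVO_REGISTRY[type_name]
    except KeyError:
        raise ValueError(f"unknown servo type: {type_name!r}") from None
    return ctor(servo_id)


register_servo("mock", MockServo)

servo = create_servo("mock", servo_id=1)
servo.set_position(90.0)
print(servo.get_position())  # 90.0
```

With this shape, a real driver would be registered under its own type name, and switching between hardware and the mock becomes a one-word change in the configuration rather than a code change.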

AI Inference Pipeline

Extensible model architecture supporting SmolVLA (vision-language-action), decision transformers, and custom models. C++/Python interop via pybind11 for real-time inference on edge devices.
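One way an extensible model architecture can be organized is a common base class plus a name-based registry, so that a custom model plugs in without touching the pipeline. The following is a minimal sketch under that assumption; `InferenceModel`, `register_model`, `MODEL_REGISTRY`, and `HoldPositionModel` are illustrative names, not JOSHUA's real interfaces, and the toy model stands in for an actual policy such as SmolVLA.

```python
from abc import ABC, abstractmethod
from typing import Dict, List, Type


class InferenceModel(ABC):
    """Hypothetical base class that every pluggable model implements."""

    @abstractmethod
    def predict(self, observation: List[float]) -> List[float]:
        """Map an observation vector to an action vector."""


# Models are looked up by the name used in the configuration.
MODEL_REGISTRY: Dict[str, Type[InferenceModel]] = {}


def register_model(name: str):
    """Class decorator that records a model under a config-visible name."""
    def decorator(cls: Type[InferenceModel]) -> Type[InferenceModel]:
        MODEL_REGISTRY[name] = cls
        return cls
    return decorator


@register_model("hold_position")
class HoldPositionModel(InferenceModel):
    """Toy custom model: outputs a zero action for every input element."""

    def predict(self, observation: List[float]) -> List[float]:
        return [0.0] * len(observation)


model = MODEL_REGISTRY["hold_position"]()
print(model.predict([0.1, -0.4, 1.2]))  # [0.0, 0.0, 0.0]
```

Because the base class is abstract, a model that forgets to implement `predict` fails at construction time rather than in the middle of an inference loop.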

ROS2 Middleware Integration

Built on ROS2 (Humble/Jazzy) with 91 standard message types, configurable QoS profiles, and automatic node lifecycle management through the Node Generator orchestrator.

MuJoCo Simulation

Physics-based simulation with interactive, passive, mirror (digital twin), and offscreen rendering modes. GPU-accelerated RL training via MuJoCo XLA (MJX) and NVIDIA Isaac Sim integration.

Cross-Platform Deployment

Supports AMD64 and ARM64 (NVIDIA Jetson Orin Nano), with Docker containers for Ubuntu 22.04 and 24.04. The Bazel build system provides reproducible, hermetic builds with cross-compilation.